Neural Networks

The brain contains more than 80 billion cells — called neurons — which exchange information with one another via small pulses of electricity.

One neuron doesn't talk directly with all 80 billion others. Rather, neurons are connected into structures that perform specialized functions. These structures are biological neural networks.

The human brain is too complex to discuss in much detail here, but we don't need to know all the details to land on its most important characteristic — “Brains learn from experience.”

Each time you learn a new game, a dance, or a mathematical skill, neurons in your brain strengthen their lines of communication with some neurons and prune their connections to others. The structure of a neural network evolves as you gain new abilities.

Artificial neural networks (ANNs, for short) were invented in order to understand and emulate the functions of the mammalian brain. But in recent years, they have taken on a life of their own.

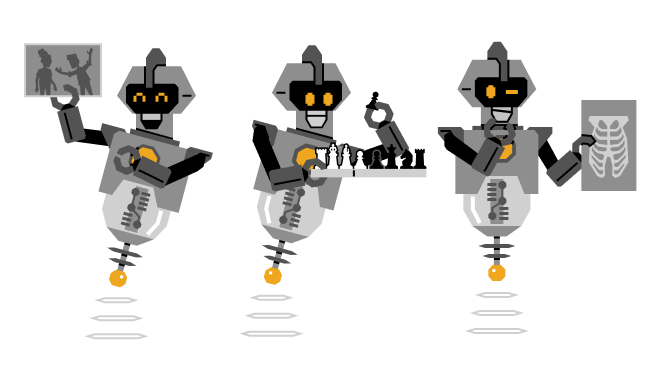

Computers today can follow billions of individual instructions each second. This explosion in computing power has let scientists build ANNs that learn to identify people in images, play chess, and even help doctors make medical diagnoses.

In other words, ANNs learn to do complex tasks that previously only humans could do. At the end of this lesson, you'll have an opportunity to play with an ANN that we've taught to see, but it will make more sense to get acquainted with the basics of how ANNs work first.

Artificial Neurons

Just as biological neurons are the basic units of the brain, artificial neurons are the computational building blocks of an artificial neural network (ANN). And, like biological neurons, artificial neurons respond to the information that's presented to them.

They can use this information to make predictions. Let's see an example.

This is Chester. He's a pretty easygoing cat.

He likes when his human scratches his back, his chin, his belly — pretty much anywhere.

This is an ANN with four inputs and one artificial neuron. It predicts how satisfied Chester will be when his human gives him attention:

The inputs to this neuron are labeled with the places where Chester's human can rub him. Switching an input to on means Chester will get a rub there. The artificial neuron labeled with a Chester icon displays his predicted response. Try flipping a few of the inputs to on and notice how the artificial neuron's appearance changes.

Turning some of the inputs on changes how full the Chester neuron is. The fuller it is, the happier we can expect Chester to be after receiving rubs for a few minutes in all of the places corresponding to inputs that are switched on.

When the neuron is entirely black, the neural net predicts that Chester will start to purr.

This neural net predicts that Chester will purr if you rub his back, chin, and belly all at the same time. But if you scratch his ears, he's not amused.

Switching on different combinations of inputs produces different responses from the neuron.

NOTE

When the neuron is entirely black, we say it is fully activated by the inputs. If the neuron is empty, we say it is completely inactive.

For most input combos, Chester's neuron is somewhere between fully activated and completely inactive.
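One way to picture what the Chester neuron computes is a weighted sum of the switched-on inputs, limited to the range from 0 (completely inactive) to 1 (fully activated). The sketch below is only an illustration: the weight values and the clamping rule are assumptions, since the lesson does not give the numbers behind the interactive neuron.

```python
# Hypothetical weights: the lesson does not state numeric values.
# They are chosen so that back + chin + belly fully activates the neuron,
# and so that ears pull the activation down.
CHESTER_WEIGHTS = {"back": 0.5, "chin": 0.25, "belly": 0.25, "ears": -0.25}


def activation(switched_on, weights):
    """Return how full the neuron is: 0.0 is inactive, 1.0 is fully activated."""
    total = sum(weights[name] for name in switched_on)
    # Assumed rule: the fill level cannot go below empty or above full.
    return max(0.0, min(1.0, total))


full = activation({"back", "chin", "belly"}, CHESTER_WEIGHTS)
partial = activation({"back"}, CHESTER_WEIGHTS)
ears_only = activation({"ears"}, CHESTER_WEIGHTS)

print(full, partial, ears_only)  # 1.0 0.5 0.0
```

With these assumed weights, most combinations land somewhere between 0 and 1, which matches the partly filled neuron described above.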

This example shows us how an artificial neuron can make a prediction. Next, we'll look at an ANN with a slightly different configuration to see how differences can change the overall prediction.

Positive and Negative Inputs

This is Lester.

He's a handful. Sometimes he eats trash and makes himself sick.

This is an ANN that predicts what kind of attention Lester likes today:

Use the neuron to decide how to rub Lester to make him happy. Can you find a combination of inputs that fully activates the neuron to make him purr?

Pretty much any part you try to rub will irritate Lester — the black fill on the neuron decreases. The one exception is if you rub his ears, but the response isn't strong enough to make him purr.

You probably noticed the lines connecting the inputs to the neuron have two different colors. Switching on inputs that have a pink connection has a different effect than switching on the input with the green connection.

The difference in color helps you tell how a neuron will be affected by its inputs.

NOTE

The green connections tend to activate the neuron, so we say they are positive. The pink connections tend to deactivate the neuron, so we say they are negative.

On a different day, Lester's mood is predicted by a neuron with a slightly different configuration:

Notice that when none of the inputs to the neuron are on, the neuron is half-filled. Lester is completely indifferent — neither happy nor irritated — but he can also be indifferent when you're rubbing him.

The neuron predicts Lester will be indifferent to quite a few combinations. We've seen that any pair of one green and one pink input will have no net effect if they're on together, just as −1 + 1 = 0.
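The cancellation can be sketched in a few lines of code. This is a rough model under stated assumptions: the baseline of 0.5 stands in for the half-filled neuron, the weights of +0.25 and −0.25 stand in for green and pink connections, and the assignment of body parts to colors is invented for illustration.

```python
# Assumed baseline: the neuron is half-filled when no inputs are on.
BASELINE = 0.5

# Hypothetical weights: positive for green connections, negative for pink.
# Which body part has which color is an assumption, not taken from the lesson.
LESTER_WEIGHTS = {"ears": 0.25, "chin": 0.25, "back": -0.25, "belly": -0.25}


def activation(switched_on):
    """Baseline plus the weights of all switched-on inputs, kept within [0, 1]."""
    total = BASELINE + sum(LESTER_WEIGHTS[name] for name in switched_on)
    return max(0.0, min(1.0, total))


nothing_on = activation(set())
one_of_each = activation({"ears", "back"})   # one green, one pink
only_green = activation({"ears", "chin"})
only_pink = activation({"back", "belly"})

print(nothing_on, one_of_each, only_green, only_pink)  # 0.5 0.5 1.0 0.0
```

One green input and one pink input leave the neuron exactly where it started, so the prediction is the same indifference as with no rubs at all.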

There's a lot more to learn about artificial neurons, but now that we have the basics, let's see what we can get a larger ANN to do.

Layered Networks

So far, we've seen a few small ANNs that make predictions about whether one cat or another is happy. But how do we make ANNs that do more complex tasks — like predict the prices of stocks or recognize faces in photos? After all, that's what you came here for, right?

ANNs with a single neuron — like the ones we've seen so far — have limited predictive power. However, things start to get more interesting when you add more neurons. Let's see an example with… — you guessed it —

…another cat.

This is Jester. He's got a big heart, but he's also got a lot of energy — especially in rooms with drapes.

Here's another ANN that makes rub-related predictions but reflects Jester's more complex rub-related preferences.

Notice that this ANN has four neurons and six inputs. There are three neurons in the middle layer of this network. At the top, the Jester neuron displays his predicted response to rubs.

Does the Jester neuron at the top of this ANN respond to flipping on the inputs along the bottom?

The inputs indirectly control the state of Jester's neuron. The three neurons in the middle layer are the inputs to the Jester neuron at the top.

The middle-layer neurons are controlled directly by the inputs.

Let's use this ANN to figure out what kinds of rubs Jester likes. To fully activate the Jester neuron, it helps to think about which of these three neurons need to be on.

Now that you know which neuron in the middle layer should be most active, try setting the inputs to the three neurons in the middle. Can you get Jester to purr by fully activating the Jester neuron? Remember that being fully activated means that the neuron is completely filled up.

Jester is kind of a flexible cat. He likes rubs on his back, belly, and rump. But he'll also be equally happy with rubs on his back, belly, and neck — or his back, belly, neck, and rump.

This kind of flexibility is not possible in an ANN with just one neuron.
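A layered prediction like Jester's can be sketched by feeding the outputs of the middle neurons into the top neuron. Everything numeric here is hypothetical: the weights, the roles of the three middle neurons, and the two input names "ears" and "chin" are assumptions chosen so that the sketch reproduces the preferences described above.

```python
def clamp(x):
    """Keep an activation between 0.0 (inactive) and 1.0 (fully activated)."""
    return max(0.0, min(1.0, x))


def neuron(inputs, weights):
    """Weighted sum of the inputs, clamped to the range [0, 1]."""
    return clamp(sum(w * x for w, x in zip(weights, inputs)))


# Six inputs; "ears" and "chin" are hypothetical names for the unnamed two.
INPUT_NAMES = ["back", "belly", "neck", "rump", "ears", "chin"]

# Hypothetical weights, one row per middle-layer neuron.
MIDDLE_WEIGHTS = [
    [0.5, 0.5, 0.0, 0.0, 0.0, 0.0],  # full only when back AND belly are on
    [0.0, 0.0, 1.0, 1.0, 0.0, 0.0],  # full when neck OR rump (or both) is on
    [0.0, 0.0, 0.0, 0.0, 1.0, 1.0],  # full when ears or chin is on
]

# Hypothetical weights from the three middle neurons to the Jester neuron.
OUTPUT_WEIGHTS = [0.5, 0.5, -0.5]


def predict(switched_on):
    """Pass the inputs through the middle layer, then through the Jester neuron."""
    x = [1.0 if name in switched_on else 0.0 for name in INPUT_NAMES]
    middle = [neuron(x, weights) for weights in MIDDLE_WEIGHTS]
    return neuron(middle, OUTPUT_WEIGHTS)


print(predict({"back", "belly", "rump"}))          # 1.0
print(predict({"back", "belly", "neck"}))          # 1.0
print(predict({"back", "belly", "neck", "rump"}))  # 1.0
print(predict({"back", "belly"}))                  # 0.5
```

In this sketch the inputs never touch the Jester neuron directly. They only change the middle neurons, and the middle neurons decide the final prediction.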

The predictive power of neural networks is derived from processing information through layers of neurons. The three middle neurons in Jester's ANN are a small example of a layer. What do you think an ANN with 25 neurons in a layer can do? Let's take a look.

Seeing Digits

A few principles have emerged from our exploration with cats: ANNs make predictions based on their inputs. Neurons follow rules mechanically to compute their outputs.

By stacking neurons in layers, we create the potential to make increasingly complex predictions — as long as we can find the right connections between the layers.

The complexity of an ANN's predictions increases with the number of connections. For example, here's an ANN with 50 neurons in two layers that has been trained to recognize the digits 0 through 9.

Instead of a handful of inputs, it has a field of 400 pixels for its inputs. As you feed digits to this ANN by drawing them in the pixel field, keep in mind this particular ANN expects the digits to be roughly centered in the field and to have a height close to the height of the field — in other words, draw big.

Can you find any digits that the ANN can't recognize very well?

You may have noticed that the field of pixel inputs doesn't appear to be connected to the layers of neurons by pink and green connections. In fact, each of the 25 neurons in the first layer is receiving information from all 400 pixels. We don't show you all of the 400 × 25 = 10,000 connections between the pixel field and the first layer because there are just too many.
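The connection count follows from multiplying the sizes of two neighboring layers, assuming every unit in one layer connects to every unit in the next. The helper name `connection_counts` below is made up for this sketch, and the sizes 400 and 25 come from the lesson.

```python
def connection_counts(layer_sizes):
    """Connections between each pair of adjacent, fully connected layers."""
    return [a * b for a, b in zip(layer_sizes, layer_sizes[1:])]


PIXELS = 400       # size of the pixel field, from the lesson
FIRST_LAYER = 25   # neurons in the first layer, from the lesson

counts = connection_counts([PIXELS, FIRST_LAYER])
print(counts)  # [10000]
```

Passing a longer list of layer sizes returns one count per pair of neighboring layers, which shows how quickly the number of connections grows as layers are added.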

We do display the connections between the other layers. By the look of them, you can probably tell that they were not set by hand. This ANN learned to recognize digits, and the positive and negative connections you can see were set over a long period of training.

By the end of this course, you'll understand what this ANN is computing when it is presented with an image of a digit and how an ANN like this is trained.

It's also important to keep in mind what you won't learn in this course. You won't learn how to design artificial intelligence that can replace human ingenuity. As capable as it is, the digit-recognizing network isn't thinking. It is a mathematical waterfall. Inputs cascade through layers of calculation to end up at an output — a guess for what digit is drawn.
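The waterfall picture can be written as a loop that hands each layer's outputs to the next layer, followed by picking the most active output as the guess. This is a toy sketch, not the digit network itself: the four "pixels", the layer sizes, the hand-picked weights, and the clamping rule are all assumptions, and a real network would get its weights from training.

```python
def clamp(x):
    """Keep an activation between 0.0 and 1.0."""
    return max(0.0, min(1.0, x))


def layer(inputs, weight_rows):
    """One layer of neurons: each row of weights yields one activation."""
    return [clamp(sum(w * x for w, x in zip(row, inputs))) for row in weight_rows]


def cascade(inputs, layers):
    """Feed the inputs through every layer in order; return the final activations."""
    values = inputs
    for weight_rows in layers:
        values = layer(values, weight_rows)
    return values


# Hypothetical hand-picked weights for a tiny 4 -> 2 -> 2 network.
TOY_LAYERS = [
    [[1.0, 0.0, 0.0, 1.0],   # responds to pixels 0 and 3
     [0.0, 1.0, 1.0, 0.0]],  # responds to pixels 1 and 2
    [[1.0, -1.0],            # output 0: first middle neuron on, second off
     [-1.0, 1.0]],           # output 1: second middle neuron on, first off
]

pixels = [1.0, 0.0, 0.0, 1.0]
outputs = cascade(pixels, TOY_LAYERS)
guess = outputs.index(max(outputs))  # the most active output is the guess

print(outputs, guess)  # [1.0, 0.0] 0
```

Nothing in the loop looks back or reconsiders. Each layer applies the same mechanical rule to whatever the previous layer produced.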

Just because an artificial neural network can learn doesn't mean that it thinks. Later in this chapter, you'll help to design a mechanical computer consisting of about 300 matchboxes that can learn to play tic-tac-toe. No one would argue that a pile of boxes can think.

But before we get to that, we'll take a closer look at the problem of computer vision in the next lesson.